Whenever I write a demo, I try to take screenshots of the work as it develops. There are a couple of reasons for doing this. Firstly, it’s nice to look back and see the progress. Secondly, sometimes I work myself into a creative dead end with a scene, and it can be good to look back and get some inspiration from earlier variations.

I’m trying to figure out how to improve my working methods, and the screenshot habit has always worked well for me. So, while working on The Next Level, I made a conscious effort to take more screenshots than normal.

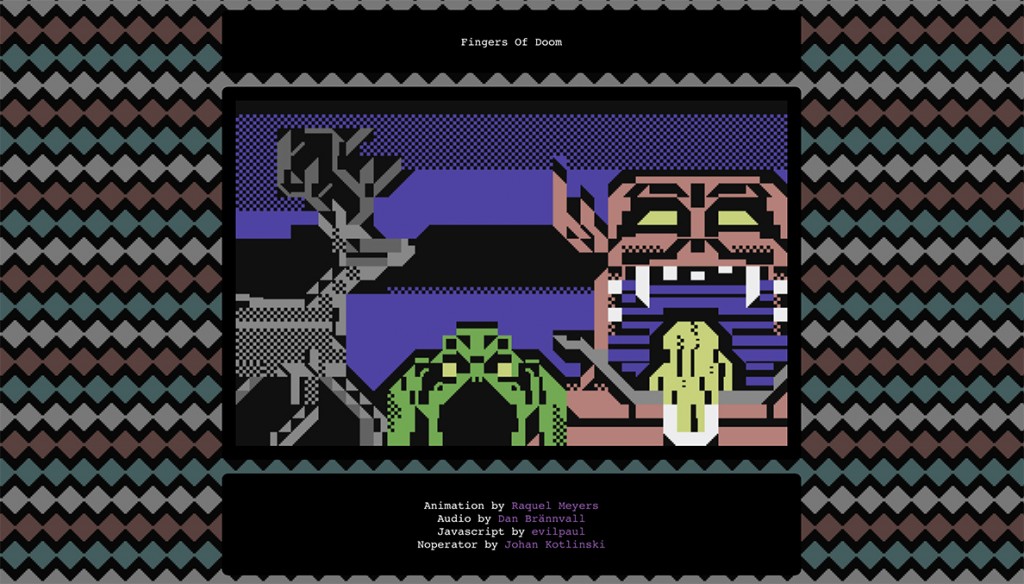

I love looking at other people’s work-in-progress shots. Since I now have a load of shots of my own demo, I thought it would be good to share some of them with you.

Engine

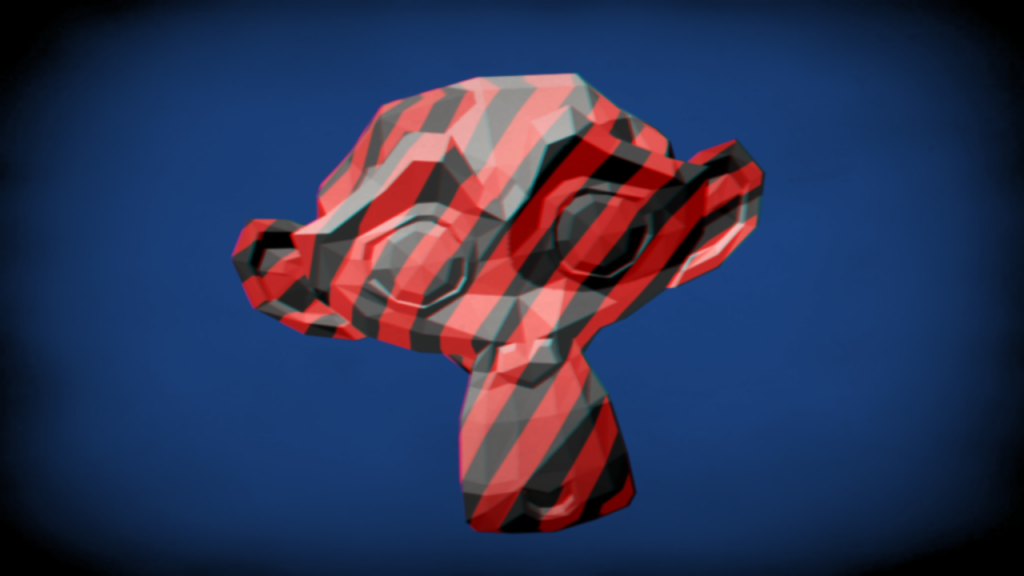

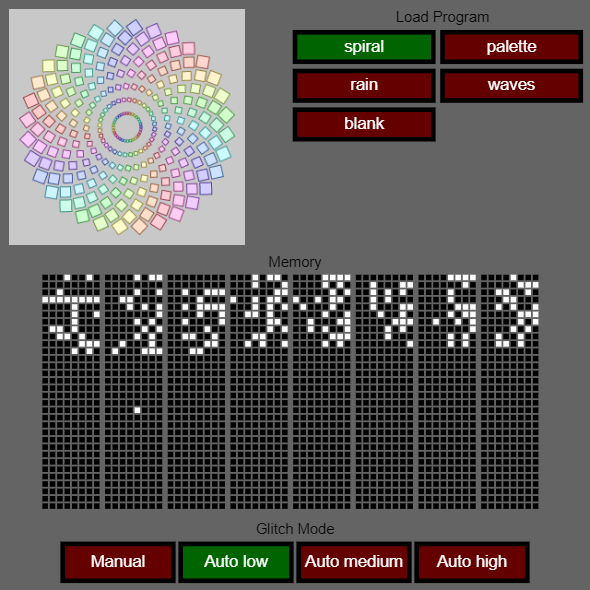

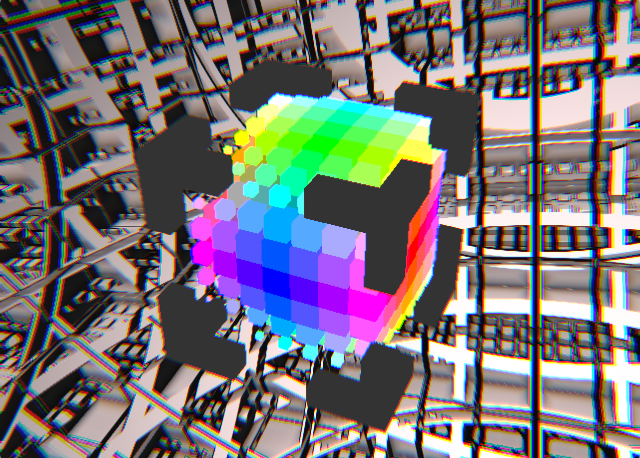

The engine itself evolved from the one used in 0xAnniversary. That demo was based around voxels, so it only ever had to render cubes. This time I added model loading and animation.
